[AWS] S3Bucket - MFT + Terraform Project 06

Managed File Transfer to/from an S3 Bucket (IAM Role/Bucket Policy)

Inception

Hello everyone. This article is part of the Terraform + AWS series. It does not depend on the previous articles; I use this series to publish projects and knowledge.

Overview

Hello Gurus. Managed File Transfer: streamlining data flows with security and reliability. In today's data-driven world, businesses frequently need to move files reliably between systems, partners, and organizations. Managed file transfer (MFT) solutions offer a way to streamline these processes. Here, however, we are going to build our own solution using an S3 bucket, an S3 bucket policy, IAM users, an IAM role, and the AWS CLI s3api commands.

Today's example uses an S3 bucket as centralized object storage for sending and receiving files, with access controlled through IAM users, an IAM role, and an S3 bucket policy.

Building-up Steps

Today we will build an S3 bucket, S3 bucket policies, IAM users, and an IAM role so that IAM users can send files to and receive files from the bucket. The infrastructure will be built using Terraform. ✨

The Architecture Design Diagram:

Building-up steps in detail:

Create an S3 Bucket.

Set the bucket ACL to "private".

Set object ownership to "ObjectWriter".

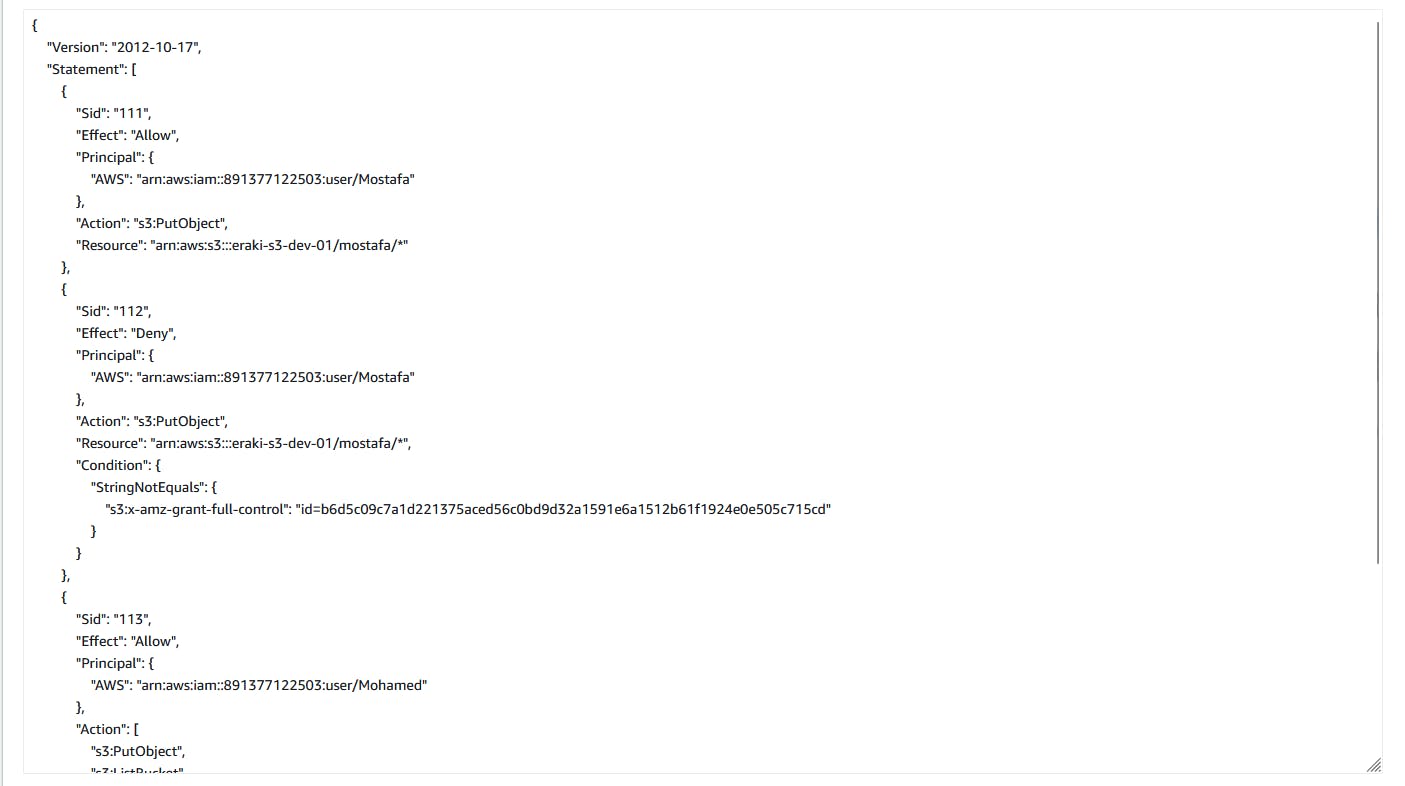

Create Bucket policies:

Grant IAM user "mohamed" put, get, delete, and list permissions on the entire bucket.

Allow IAM user "mostafa" to put objects under the mostafa/* path, and ensure that the bucket owner keeps full control of those objects. With object ownership set to "ObjectWriter", an uploaded object is owned by its uploader (mostafa), so the bucket owner cannot grant another user permission on it, which does not fit our case.

So we make the uploader (i.e. mostafa) hand control to the bucket owner: the policy denies the upload unless mostafa specifies the bucket owner's canonical ID with the --grant-full-control argument (we will see this in the steps below).

Create IAM user "Mohamed"

Create IAM user "Mostafa"

Create IAM user "Taha"

Create an IAM role for the Taha user that allows him to get objects from the mostafa/* path. Consider Taha an external party (i.e. not an internal member), so we will give him temporary access through an IAM role.

Enough talking, let's go forward... 😉

Clone The Project Code

Clone the repository to your local device as follows:

    pushd ~ # change to the home directory
    git clone https://github.com/Mohamed-Eleraki/terraform.git
    pushd ~/terraform/AWS_Demo/09-S3BucketPolicy03

Open the project in VS Code, or any editor you like:

    code . # open the current path in VS Code

Terraform Resources + Code Steps

Once you open the code in your editor, you will notice that the resources have already been defined. However, we will go through creating them step by step.

Create an S3 Bucket

Create a new file called s3.tf. Splitting resources across separate .tf files is a good way to reduce code complexity.

Create the S3 bucket resources as below:

    # Declare the required providers
    terraform {
      required_providers {
        aws = {
          source  = "hashicorp/aws"
          version = "~> 5.0"
        }
      }
    }

    # Configure the AWS provider
    provider "aws" {
      region  = "us-east-1"
      profile = "eraki" # AWS CLI profile used to authenticate
    }

    # Create the S3 bucket
    resource "aws_s3_bucket" "s3_01" {
      bucket = "eraki-s3-dev-01"

      force_destroy       = true # destroy the bucket even if it is not empty
      object_lock_enabled = false

      tags = {
        Name        = "eraki-s3-dev-01-Tag"
        Environment = "Dev"
      }
    }

    # Set the ownership of objects to the object writer
    resource "aws_s3_bucket_ownership_controls" "ownership_s3_01" {
      bucket = aws_s3_bucket.s3_01.id

      rule {
        #object_ownership = "BucketOwnerPreferred"
        object_ownership = "ObjectWriter"
      }
    }

    # Set the bucket ACL to private (ownership controls must exist first)
    resource "aws_s3_bucket_acl" "acl_s3_01" {
      depends_on = [aws_s3_bucket_ownership_controls.ownership_s3_01]

      bucket = aws_s3_bucket.s3_01.id
      acl    = "private"
    }

    # Attach the bucket policy built from the policy document below
    resource "aws_s3_bucket_policy" "Policy_s3_01" {
      bucket = aws_s3_bucket.s3_01.id
      policy = data.aws_iam_policy_document.policy_document_s3_01.json
    }

    # Fetch the bucket owner's canonical ID
    data "aws_canonical_user_id" "current_user" {}

    # Create the policy document
    data "aws_iam_policy_document" "policy_document_s3_01" {
      # Allow Mostafa to upload objects under mostafa/
      statement {
        sid    = "111"
        effect = "Allow"

        principals {
          type        = "AWS"
          identifiers = [aws_iam_user.Mostafa.arn]
        }

        actions   = ["s3:PutObject"]
        resources = ["${aws_s3_bucket.s3_01.arn}/mostafa/*"]
      }

      # Deny Mostafa's uploads unless they grant the bucket owner full control
      statement {
        sid    = "112"
        effect = "Deny"

        principals {
          type        = "AWS"
          identifiers = [aws_iam_user.Mostafa.arn]
        }

        actions   = ["s3:PutObject"]
        resources = ["${aws_s3_bucket.s3_01.arn}/mostafa/*"]

        condition {
          test     = "StringNotEquals"
          variable = "s3:x-amz-grant-full-control"
          values   = ["id=${data.aws_canonical_user_id.current_user.id}"]
        }
      }

      # Give Mohamed put, get, delete, and list on the whole bucket
      statement {
        sid    = "113"
        effect = "Allow"

        principals {
          type        = "AWS"
          identifiers = [aws_iam_user.Mohamed.arn]
        }

        actions = [
          "s3:PutObject",
          "s3:GetObject",
          "s3:DeleteObject",
          "s3:ListBucket"
        ]

        resources = [
          aws_s3_bucket.s3_01.arn,
          "${aws_s3_bucket.s3_01.arn}/*"
        ]
      }
    }

    # Create S3 objects (i.e. directories)
    resource "aws_s3_object" "directory_object_s3_01_mostafa" {
      bucket       = aws_s3_bucket.s3_01.id
      key          = "mostafa/"
      content_type = "application/x-directory"
    }

Create IAM User

Create a new file called iam.tf.

Create the IAM user resources as below:

    resource "aws_iam_user" "Mohamed" {
      name = "Mohamed"
    }

    resource "aws_iam_user" "Taha" { # (i.e. consider it as external access)
      name = "Taha"
    }

    resource "aws_iam_user" "Mostafa" {
      name = "Mostafa"
    }

Create IAM Role

Append the following to iam.tf:

    # Create an IAM role for Taha (i.e. consider it as external access)
    resource "aws_iam_role" "iam_role_get_s3_taha" {
      name                 = "s3_get_access_role"
      max_session_duration = 3600 # maximum session duration in seconds (60 minutes)

      # Trust policy: only the Taha user may assume this role
      assume_role_policy = <<EOF
    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "114",
          "Effect": "Allow",
          "Action": "sts:AssumeRole",
          "Principal": {
            "AWS": "${aws_iam_user.Taha.arn}"
          }
        }
      ]
    }
    EOF
    }

    # Create the S3 get policy document
    data "aws_iam_policy_document" "s3_get_access_policy_document" {
      statement {
        sid    = "115"
        effect = "Allow"

        actions   = ["s3:GetObject"]
        resources = ["${aws_s3_bucket.s3_01.arn}/mostafa/*"]
      }
    }

    # Create a policy just to hold the document created above
    resource "aws_iam_policy" "holds_s3_get_policy" {
      name   = "holds_s3_get_policy"
      policy = data.aws_iam_policy_document.s3_get_access_policy_document.json
    }

    # Attach the S3 get policy to the role
    resource "aws_iam_role_policy_attachment" "attach_s3_get_role" {
      role       = aws_iam_role.iam_role_get_s3_taha.name
      policy_arn = aws_iam_policy.holds_s3_get_policy.arn
    }

Apply Terraform Code

After configuring your Terraform code, it's time for the exciting part: applying the code and watching it become real. 😍

First things first, let's make our code cleaner:

    terraform fmt

If this is a fresh clone, initialize the working directory so that the AWS provider is downloaded:

    terraform init

Running a plan is always good practice (or even just apply 😁):

    terraform plan

Let's apply, if no errors appear and you agree with the resources to be built:

    terraform apply -auto-approve

Check S3 File Streaming

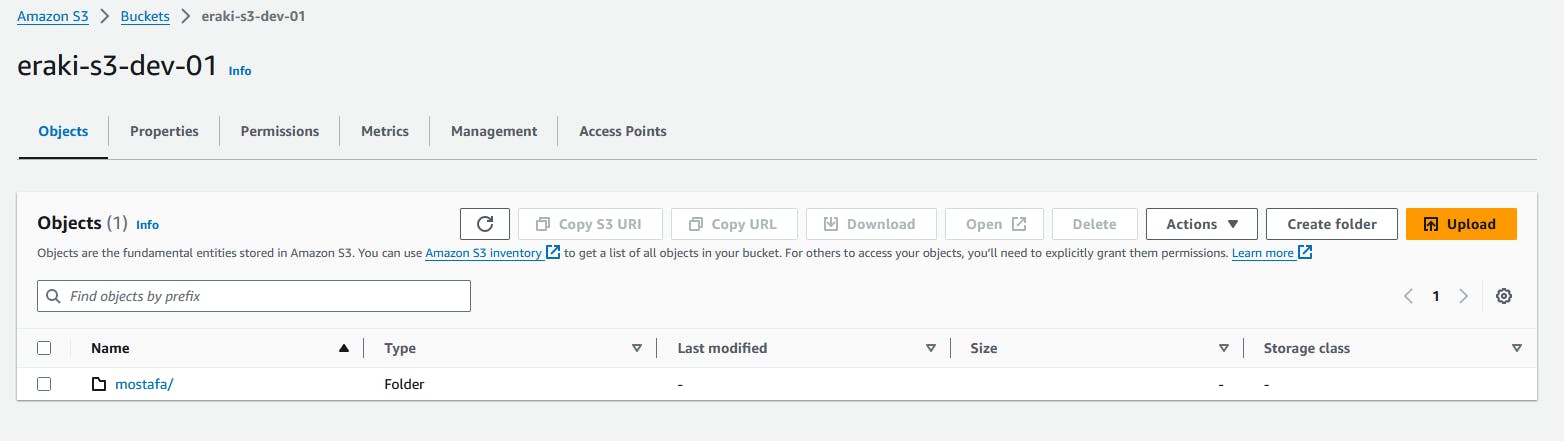

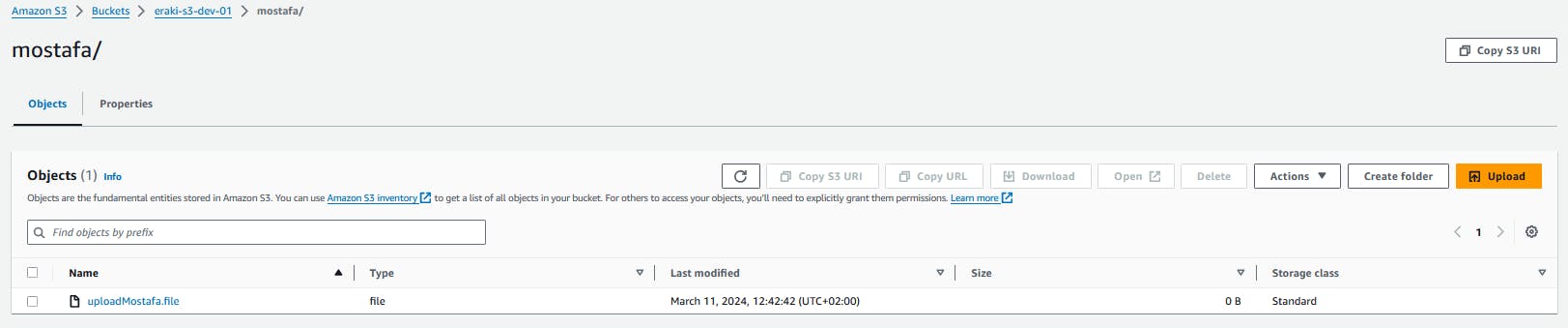

Check the bucket objects; the bucket should contain the mostafa directory.

Check the bucket policy.

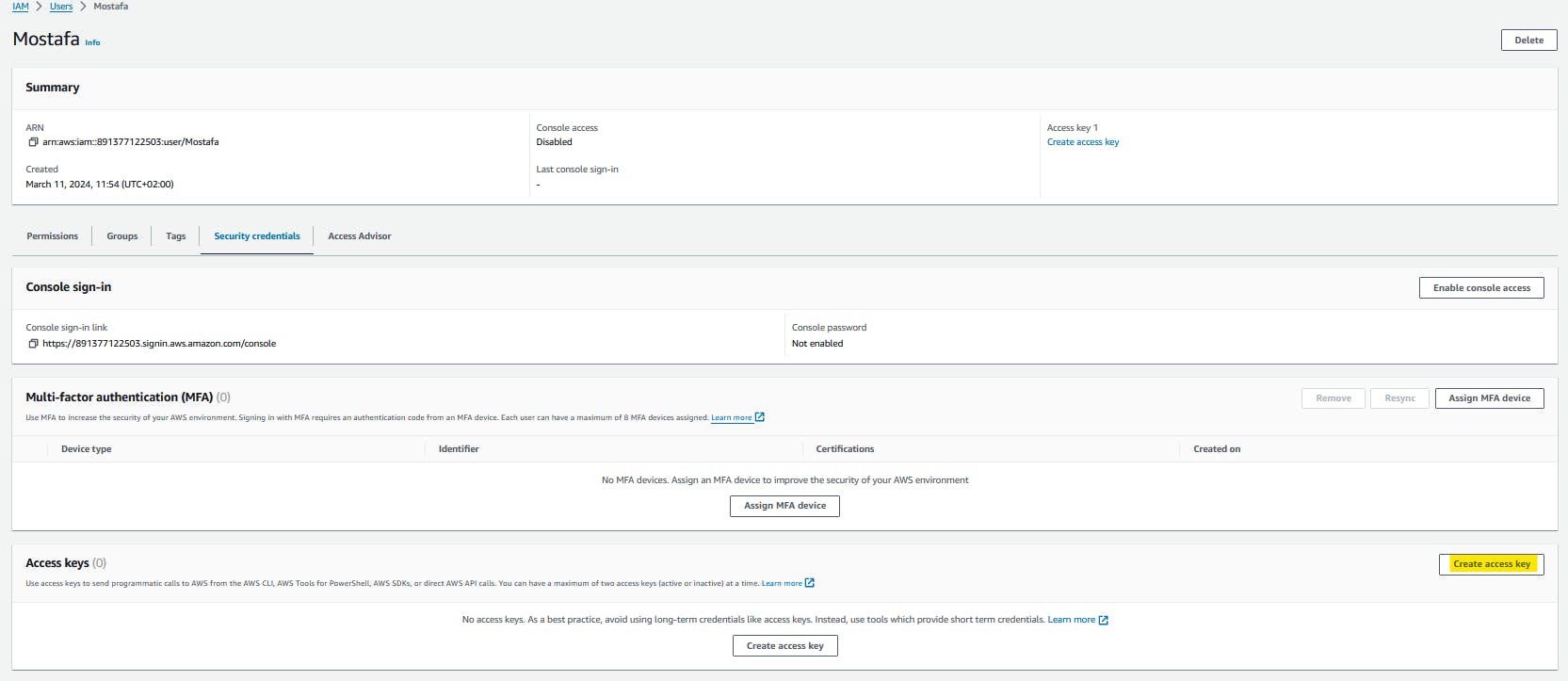
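The same two console checks can also be made from the terminal. The sketch below is a minimal example, assuming the admin profile `eraki` from the provider block is configured locally; the function names `list_prefix` and `show_bucket_policy` are hypothetical helpers, not part of the project code.

```shell
# List the object keys under a prefix.
# $1: bucket name, $2: key prefix, $3: AWS CLI profile.
list_prefix() {
  aws s3api list-objects-v2 --bucket "$1" --prefix "$2" \
    --query 'Contents[].Key' --output text --profile "$3"
}

# Print the bucket policy document as JSON.
# $1: bucket name, $2: AWS CLI profile.
show_bucket_policy() {
  aws s3api get-bucket-policy --bucket "$1" \
    --query 'Policy' --output text --profile "$2"
}

# Usage (not run here; needs valid credentials):
#   list_prefix eraki-s3-dev-01 mostafa/ eraki
#   show_bucket_policy eraki-s3-dev-01 eraki
```

If the apply succeeded, the first call should list the `mostafa/` key and the second should print the three statements defined in s3.tf.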

Check mostafa user permissions.

Let's try to put an object using mostafa's programmatic access.

- Create a CLI access key for the mostafa user and download the CSV file.

- Open up your terminal.

Check that the AWS CLI is installed:

    aws --version

If it is not installed, follow this doc: docs.aws.amazon.com/cli/latest/userguide/ge..

Configure mostafa's credentials:

    aws configure --profile mostafa
    # paste the values from the CSV file

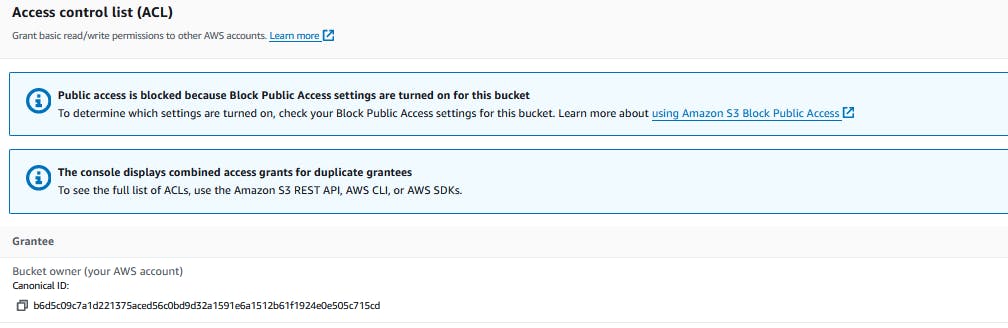

Copy the canonical ID of the bucket owner:

- Open the bucket's Permissions tab and look under Access control list (ACL).

Put an object into the mostafa/ directory using the copied canonical ID:

    touch uploadMostafa.file
    aws s3api put-object --bucket eraki-s3-dev-01 --key mostafa/uploadMostafa.file --body uploadMostafa.file --grant-full-control id="PasteCanonicalIDHere" --profile mostafa

The object should be uploaded

Check the object

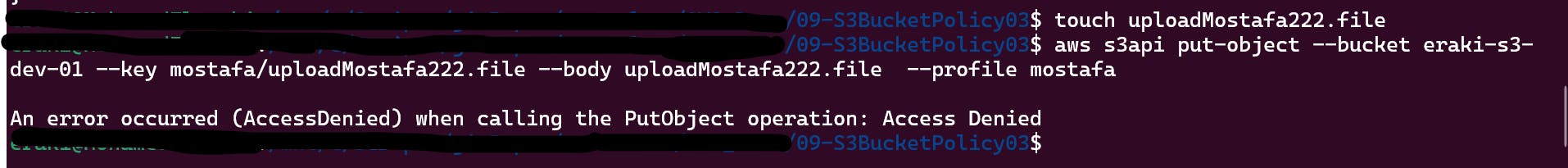
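Instead of copying the canonical ID from the console, it can be read with the CLI and passed straight to the upload. This is a minimal sketch, assuming the admin profile `eraki` is allowed to list buckets (the mostafa user is not); `bucket_owner_canonical_id` and `put_with_owner_control` are hypothetical helper names.

```shell
# Print the canonical user ID of the account that owns the buckets.
# $1: an AWS CLI profile allowed to list buckets (assumed here: eraki).
bucket_owner_canonical_id() {
  aws s3api list-buckets --query 'Owner.ID' --output text --profile "$1"
}

# Upload a file and grant the bucket owner full control over it.
# $1: bucket, $2: key, $3: local file, $4: canonical ID, $5: uploader profile.
put_with_owner_control() {
  aws s3api put-object --bucket "$1" --key "$2" --body "$3" \
    --grant-full-control "id=$4" --profile "$5"
}

# Usage (not run here; needs valid credentials):
#   owner_id=$(bucket_owner_canonical_id eraki)
#   put_with_owner_control eraki-s3-dev-01 mostafa/uploadMostafa.file \
#     uploadMostafa.file "$owner_id" mostafa
```

Running the same `put-object` call without `--grant-full-control` is a quick way to confirm that the Deny statement (sid 112) is in effect.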

If we try to put an object without specifying the canonical ID, the request fails with Access Denied.

The --grant-full-control argument gives the bucket owner full control of the uploaded object.

Check Taha user permissions.

Create a CLI access key for the taha user and download the CSV file, as in the previous steps.

Open up your terminal.

Configure the AWS profile:

    aws configure --profile taha
    # paste the values from the CSV file

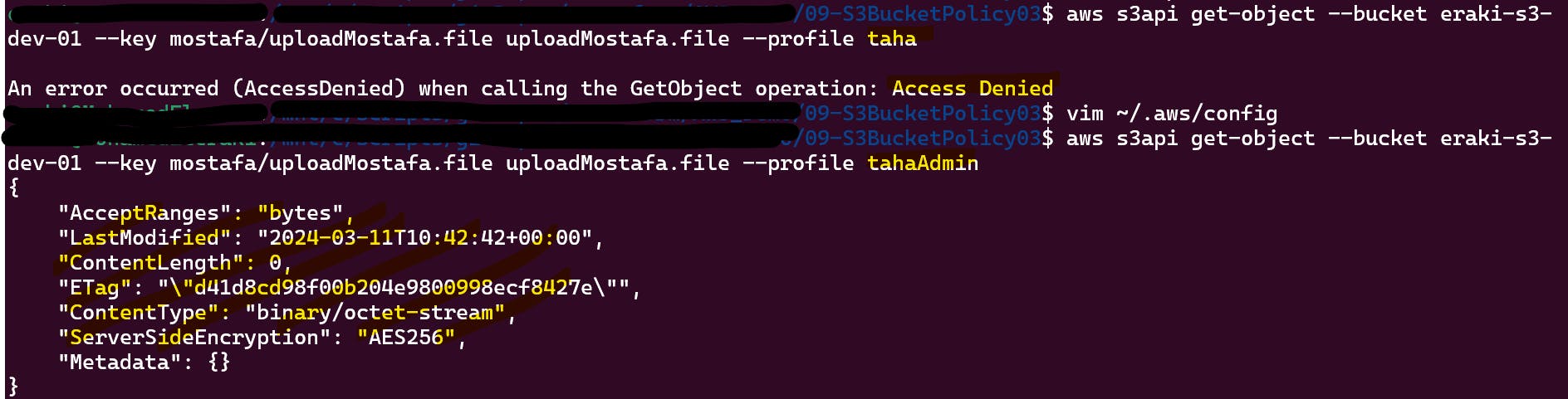

Taha has permission to get objects from the mostafa/ path only through the IAM role, so we first need to configure a profile for taha that uses the associated role.

Open up ~/.aws/config, then append the content below:

    [profile tahaAdmin]
    role_arn = <Role ARN>
    source_profile = taha
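The profile stanza can also be appended from the shell, with the role ARN looked up rather than pasted by hand. The sketch below assumes the admin profile `eraki` may call `iam get-role`; `add_role_profile` and `role_arn_for` are hypothetical helper names.

```shell
# Append a role-based profile to an AWS CLI config file, unless a
# profile with that name is already present.
# $1: config file, $2: profile name, $3: role ARN, $4: source profile.
add_role_profile() {
  if [ -f "$1" ] && grep -qF "[profile $2]" "$1"; then
    return 0
  fi
  printf '\n[profile %s]\nrole_arn = %s\nsource_profile = %s\n' \
    "$2" "$3" "$4" >> "$1"
}

# Print the ARN of an IAM role.
# $1: role name, $2: AWS CLI profile allowed to read IAM roles.
role_arn_for() {
  aws iam get-role --role-name "$1" --query 'Role.Arn' --output text --profile "$2"
}

# Usage (not run here; needs valid credentials):
#   add_role_profile ~/.aws/config tahaAdmin \
#     "$(role_arn_for s3_get_access_role eraki)" taha
```

The existence check keeps repeated runs from adding the same section twice.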

Get the file from the mostafa/ directory using the tahaAdmin profile:

    rm -rf uploadMostafa* # delete the local copy of the uploaded file
    aws s3api get-object --bucket eraki-s3-dev-01 --key mostafa/uploadMostafa.file uploadMostafa.file --profile tahaAdmin

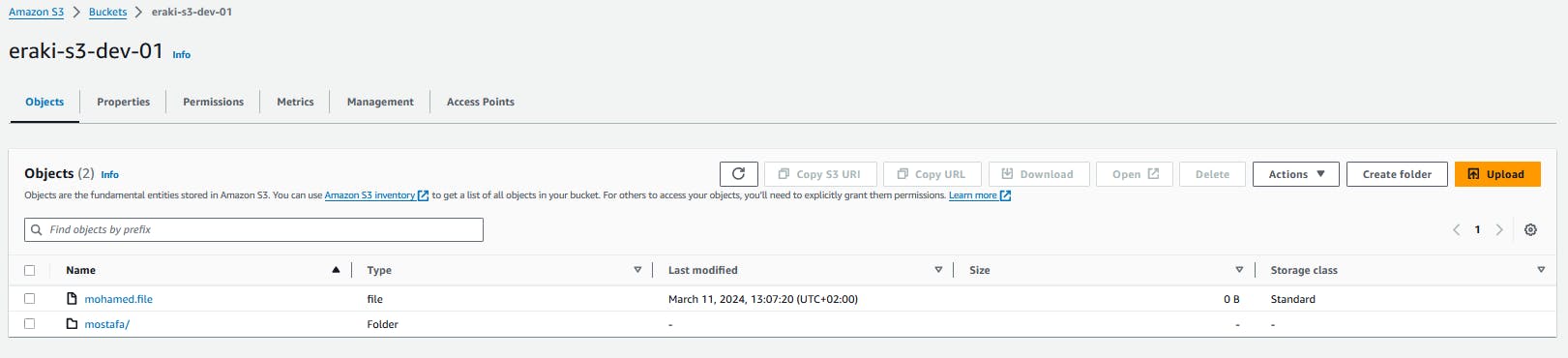
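To confirm that the download really went through the role and not through Taha's own user credentials, the identity behind the profile can be inspected. This is a small sketch; `caller_arn` and `is_assumed_role` are hypothetical helper names, and the role name is the one defined in iam.tf.

```shell
# Print the ARN of the identity a profile resolves to.
# $1: AWS CLI profile.
caller_arn() {
  aws sts get-caller-identity --query 'Arn' --output text --profile "$1"
}

# Succeed only if an ARN is an assumed-role session of the given role.
# $1: ARN to check, $2: role name.
is_assumed_role() {
  case "$1" in
    arn:aws:sts::*:assumed-role/"$2"/*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage (not run here; needs valid credentials):
#   is_assumed_role "$(caller_arn tahaAdmin)" s3_get_access_role \
#     && echo "tahaAdmin is using the role"
```

With the plain `taha` profile the ARN is that of the IAM user, and the same `get-object` call is expected to be denied, since neither the bucket policy nor any identity policy grants the user access directly.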

Check Mohamed user permissions.

Mohamed has wide permissions.

Create a CLI access key for the mohamed user and download the CSV file, as in the previous steps.

Open up your terminal.

Configure the AWS profile:

    aws configure --profile mohamed
    # paste the values from the CSV file

Try to upload an object:

    touch mohamed.file
    aws s3api put-object --bucket eraki-s3-dev-01 --key mohamed.file --body mohamed.file --profile mohamed

Try to get the object from the mostafa/ path:

    aws s3api get-object --bucket eraki-s3-dev-01 --key mostafa/uploadMostafa.file uploadMostafa.file --profile mohamed
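Mohamed's policy statement also covers delete, which the steps above do not exercise. A minimal sketch for trying it, with `key_exists` and `delete_key` as hypothetical helper names:

```shell
# Succeed if the object exists and is readable by the profile.
# $1: bucket, $2: key, $3: AWS CLI profile.
key_exists() {
  aws s3api head-object --bucket "$1" --key "$2" --profile "$3" >/dev/null 2>&1
}

# Delete a single object.
# $1: bucket, $2: key, $3: AWS CLI profile.
delete_key() {
  aws s3api delete-object --bucket "$1" --key "$2" --profile "$3"
}

# Usage (not run here; needs valid credentials):
#   delete_key eraki-s3-dev-01 mohamed.file mohamed
#   key_exists eraki-s3-dev-01 mohamed.file mohamed || echo "object is gone"
```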

Destroy environment

Destroying with Terraform is very simple. However, we should first delete the access keys, because an IAM user that still has access keys created outside Terraform cannot be deleted.

Delete all access keys created for all users.
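The keys can be removed from the console, or with a short loop like the one below. It is a sketch under the assumption that the admin profile `eraki` may manage IAM access keys; `delete_user_access_keys` is a hypothetical helper name.

```shell
# Delete every access key that belongs to an IAM user.
# $1: IAM user name, $2: AWS CLI profile allowed to manage IAM keys.
delete_user_access_keys() {
  for key_id in $(aws iam list-access-keys --user-name "$1" \
      --query 'AccessKeyMetadata[].AccessKeyId' --output text --profile "$2"); do
    # Skip the placeholder the CLI may print for an empty result
    [ "$key_id" = "None" ] && continue
    aws iam delete-access-key --user-name "$1" \
      --access-key-id "$key_id" --profile "$2"
  done
}

# Usage (not run here; needs valid credentials):
#   for user in Mohamed Mostafa Taha; do
#     delete_user_access_keys "$user" eraki
#   done
```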

Then destroy all resources using Terraform:

    terraform destroy -auto-approve

Conclusion

By integrating with S3, organizations can gain a number of benefits, including improved data security, simplified data management, and increased operational efficiency. S3, together with the AWS CLI s3api commands, provides a secure and scalable storage solution for MFT files.

That's it. Very straightforward, very fast 🚀. I hope this article inspired you, and I would appreciate your feedback. Thank you.